Businesses increasingly need to make decisions immediately, and those decisions need to be backed by data. That’s where real-time analytics can help. Whether you’re a SaaS company looking to release a new feature quickly or a retailer trying to better manage inventory, real-time insights let you assess and act on data as it arrives. As a result, you can make better-informed decisions, respond to the latest trends, and boost operational efficiency.

We’re here to walk you through everything you need to know about real-time analytics. Whether you want to learn more about the benefits of real-time analytics or dive deeper into the most significant characteristics of a real-time analytics system, we’ll make sure you come away with a solid understanding of how real-time analytics can move your business forward.

What is real-time analytics?

So, what is real-time analytics? And, more importantly, how does it work?

Real-time analytics refers to pulling data from different sources as soon as it is generated. The data is then analyzed and transformed into a format that’s digestible for target users, so they can draw conclusions or garner insights immediately after the data enters a company’s system. Users can access the results on a dashboard, in a report, or through another medium.

Real-time analytics comes in two forms:

On-demand real-time analytics

With on-demand real-time analytics, users send a request, such as an SQL query, to get the analytics outcome. It relies on fresh data, but queries run only when needed.

The requester can be a data analyst or any other team member who wants insight into business activity. For instance, a marketing manager can use on-demand real-time analytics to see how social media users are reacting to an online advertisement in real time.

Continuous real-time analytics

By contrast, continuous real-time analytics takes a more proactive approach: it delivers analytics continuously, without requiring a user to make a request. Data is displayed on a dashboard through charts or other visuals, so users can see what’s happening down to the second.

One potential use case for continuous real-time analytics is cybersecurity. For instance, continuous real-time analytics can analyze streams of security data flowing into an organization’s network, making it possible to detect threats as they occur.

In addition to these two types of real-time analytics, streaming analytics plays a crucial role by processing data as it flows into the system. Let’s dive deeper into streaming analytics now.

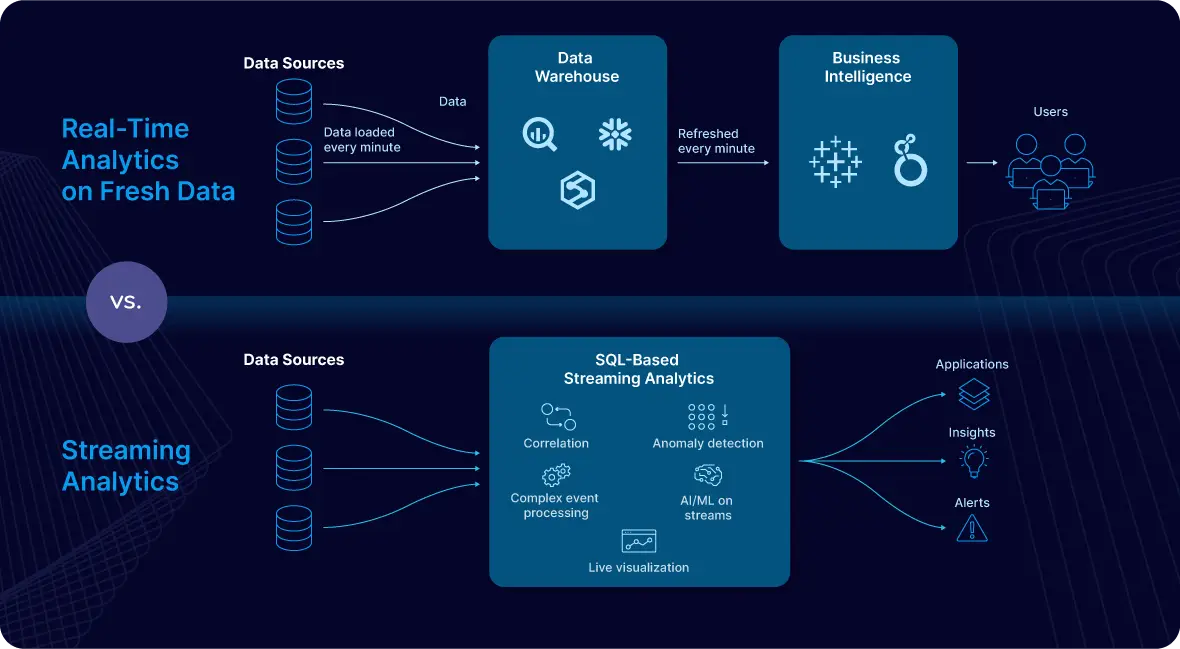

What’s the difference between real-time analytics and streaming analytics?

Streaming analytics focuses on analyzing data in motion, unlike traditional analytics, which deals with data at rest in databases or data warehouses. Streams of data are queried continuously with Streaming SQL, enabling correlation, anomaly detection, complex event processing, artificial intelligence/machine learning, and live visualization. In other words, streaming analytics is one way to achieve real-time analytics: instead of querying data after it has been stored, it processes each event while the data is still moving. This makes streaming analytics especially impactful for fraud detection, log analysis, and sensor data processing.
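To make the idea of a continuous query concrete, here is a minimal Python sketch of a tumbling-window count, the kind of aggregation a Streaming SQL query might express. It is not Striim’s Streaming SQL syntax. The event shape `(timestamp, key)` and the function name are hypothetical, and the sketch assumes events arrive in timestamp order.

```python
from collections import Counter
from typing import Iterable, Iterator, Optional, Tuple

# Hypothetical event shape: (timestamp in seconds, event key)
Event = Tuple[int, str]


def tumbling_window_counts(
    events: Iterable[Event], window_seconds: int
) -> Iterator[Tuple[int, str, int]]:
    """Emit (window_start, key, count) as soon as each window closes.

    Assumes events arrive in timestamp order; late or out-of-order
    events are not handled in this sketch.
    """
    current_start: Optional[int] = None
    counts: Counter = Counter()
    for ts, key in events:
        window_start = ts - (ts % window_seconds)
        if current_start is not None and window_start != current_start:
            # The previous window is complete: emit its results right away.
            for k, c in sorted(counts.items()):
                yield current_start, k, c
            counts.clear()
        current_start = window_start
        counts[key] += 1
    # Flush the last (possibly partial) window when the stream ends.
    if current_start is not None:
        for k, c in sorted(counts.items()):
            yield current_start, k, c


if __name__ == "__main__":
    stream = [(0, "login_failed"), (1, "login_failed"), (3, "purchase"), (12, "login_failed")]
    for row in tumbling_window_counts(stream, window_seconds=10):
        print(row)
```

Each window’s result is emitted as soon as the window closes. This is the core difference from querying data at rest, where you wait until all the data has been stored.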

How does real-time analytics work?

To fully understand the impact of real-time analytics, it helps to understand how it works.

1. Collect data in real time

Every organization can leverage valuable real-time data. What exactly that looks like varies depending on your industry, but some examples include:

- Enterprise resource planning (ERP) data: Analytical or transactional data

- Website application data: Top traffic sources, bounce rate, or number of daily visitors

- Customer relationship management (CRM) data: General interests, number of purchases, or customers’ personal details

- Support system data: Customer’s ticket type or satisfaction level

Consider your business operations to decide which type of data is most impactful for your business. You’ll also need an efficient way to collect it. For instance, say you work in a manufacturing plant and want to use real-time analytics to find faults in your machinery. You can use machine sensors to collect data and analyze it in real time to detect any signs of failure.
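As a rough illustration of spotting signs of failure in sensor data, the sketch below flags readings that deviate sharply from a rolling baseline, using a simple z-score test. The window size, threshold, function name, and readings are hypothetical. Real predictive maintenance typically uses models tuned to the specific machinery.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Iterable, Iterator, Tuple


def flag_anomalies(
    readings: Iterable[float], window: int = 20, threshold: float = 3.0
) -> Iterator[Tuple[int, float]]:
    """Yield (index, value) for readings far outside the recent baseline.

    A reading is flagged when it lies more than `threshold` standard
    deviations from the mean of the previous `window` readings.
    """
    history: deque = deque(maxlen=window)  # oldest reading drops off automatically
    for i, value in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                yield i, value
        history.append(value)


if __name__ == "__main__":
    # Hypothetical vibration readings: stable, then a sudden spike.
    vibration = [1.0, 1.1, 0.9, 1.0, 1.1, 0.9, 5.0]
    print(list(flag_anomalies(vibration, window=6)))
```

In this sketch a flagged reading still enters the baseline. A production detector might exclude it so that a sustained fault does not become the new normal.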

To collect data, you need a real-time ingestion tool that can reliably pull data from your sources.

2. Combine data from various sources

Typically, you’ll need data from multiple sources for a complete analysis. If you want to analyze customer data, for instance, you’ll need to pull it from your sales, marketing, and customer support systems. Only with all of those facets can you determine how to improve the customer experience.

To achieve this, combine data from all of your sources. You can use ETL (extract, transform, and load) tools or build your own data pipeline, then send the aggregated data to a target system such as a data warehouse.
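Here is a minimal sketch of that extract-transform-load flow for the customer example. It assumes three hypothetical extracts from sales, marketing, and support systems, all keyed by `customer_id`. An in-memory SQLite database stands in for the data warehouse. A real pipeline would use an ETL tool or a streaming integration platform instead.

```python
import sqlite3
from collections import defaultdict
from typing import Dict, List

# Extract: hypothetical rows pulled from three operational systems.
sales = [{"customer_id": 1, "amount": 120.0}, {"customer_id": 1, "amount": 30.0},
         {"customer_id": 2, "amount": 75.0}]
marketing = [{"customer_id": 1, "campaign": "spring_sale"}]
support = [{"customer_id": 2, "ticket_type": "billing", "csat": 2}]


def build_customer_view(sales: List[dict], marketing: List[dict],
                        support: List[dict]) -> Dict[int, dict]:
    """Transform: merge all sources into one record per customer."""
    view: Dict[int, dict] = defaultdict(
        lambda: {"total_spend": 0.0, "campaigns": [], "tickets": []})
    for row in sales:
        view[row["customer_id"]]["total_spend"] += row["amount"]
    for row in marketing:
        view[row["customer_id"]]["campaigns"].append(row["campaign"])
    for row in support:
        view[row["customer_id"]]["tickets"].append((row["ticket_type"], row["csat"]))
    return dict(view)


def load(view: Dict[int, dict], conn: sqlite3.Connection) -> None:
    """Load: write the combined view into the target table."""
    conn.execute("CREATE TABLE IF NOT EXISTS customer_view ("
                 "customer_id INTEGER PRIMARY KEY, total_spend REAL, "
                 "campaigns INTEGER, tickets INTEGER)")
    conn.executemany(
        "INSERT OR REPLACE INTO customer_view VALUES (?, ?, ?, ?)",
        [(cid, r["total_spend"], len(r["campaigns"]), len(r["tickets"]))
         for cid, r in view.items()])
    conn.commit()


if __name__ == "__main__":
    warehouse = sqlite3.connect(":memory:")
    load(build_customer_view(sales, marketing, support), warehouse)
    print(warehouse.execute("SELECT * FROM customer_view").fetchall())
```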

3. Extract insights by analyzing data

Finally, your team extracts actionable insights. To do this, use statistical methods and data visualizations to identify underlying patterns or correlations in the data. For example, you can use clustering to divide data points into groups based on shared features and properties. You can also use a model to make predictions from the available data, which gives users forward-looking insights they can act on.
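Clustering can be sketched with k-means (Lloyd’s algorithm), which is one common clustering method among many. The customer features below (visits per month, average basket size) are hypothetical. In practice, you would likely use a library implementation such as scikit-learn’s `KMeans` and scale the features first.

```python
import math
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def kmeans(points: Sequence[Point], k: int, iterations: int = 100,
           seed: int = 0) -> Tuple[List[Point], List[int]]:
    """Minimal k-means: returns (centroids, label of each point)."""
    rng = random.Random(seed)
    centroids = rng.sample(list(points), k)  # start from k distinct data points
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        new_labels = [min(range(k), key=lambda c: math.dist(p, centroids[c]))
                      for p in points]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for c in range(k):
            members = [p for p, label in zip(points, new_labels) if label == c]
            if members:
                new_centroids.append((sum(x for x, _ in members) / len(members),
                                      sum(y for _, y in members) / len(members)))
            else:
                new_centroids.append(centroids[c])  # keep an empty cluster's centroid
        if new_labels == labels and new_centroids == centroids:
            break  # converged: nothing changed
        labels, centroids = new_labels, new_centroids
    return centroids, labels


if __name__ == "__main__":
    customers = [(1, 10), (1.5, 12), (2, 11), (20, 200), (22, 210), (21, 190)]
    centers, groups = kmeans(customers, k=2)
    print(centers, groups)
```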

Now that you know how real-time analytics works, let’s discuss the difference between batch and real-time processing.

Batch processing vs. real-time processing: What’s the difference?

Real-time analytics is made possible by the way the data is processed. To understand this, it’s important to know the difference between batch and real-time processing.

Batch Processing

In data analytics, batch processing involves first storing large amounts of data for a period and then analyzing it as needed. This method is ideal when analyzing large aggregates or when waiting for results over hours or days is acceptable. For example, a payroll system processes salary data at the end of the month using batch processing.

“Sometimes there’s so much data that old batch processing (late at night once a day or once a week) just doesn’t have time to move all data and hence the only way to do it is trickle feed data via CDC,” says Dmitriy Rudakov, Director of Solution Architecture at Striim.
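The trickle feed Rudakov describes can be sketched as applying row-level change events to a target copy as soon as they are committed, instead of copying whole tables in a nightly batch. The `ChangeEvent` shape and its log-position field are hypothetical simplifications of what a log-based change data capture (CDC) reader emits.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional


@dataclass
class ChangeEvent:
    """One row-level change (hypothetical shape of a CDC event)."""
    lsn: int                    # position in the source transaction log
    op: str                     # "INSERT", "UPDATE", or "DELETE"
    key: int                    # primary key of the changed row
    row: Optional[dict] = None  # new row image; None for deletes


def apply_changes(target: Dict[int, dict], events: Iterable[ChangeEvent],
                  last_lsn: int = 0) -> int:
    """Apply change events in log order and return the last applied position."""
    for ev in events:
        if ev.lsn <= last_lsn:
            continue  # already applied; skipping makes replays safe
        if ev.op in ("INSERT", "UPDATE"):
            target[ev.key] = dict(ev.row)
        elif ev.op == "DELETE":
            target.pop(ev.key, None)
        else:
            raise ValueError(f"unknown operation: {ev.op}")
        last_lsn = ev.lsn
    return last_lsn


if __name__ == "__main__":
    replica: Dict[int, dict] = {}
    changes = [ChangeEvent(1, "INSERT", 10, {"status": "new"}),
               ChangeEvent(2, "UPDATE", 10, {"status": "paid"}),
               ChangeEvent(3, "INSERT", 11, {"status": "new"}),
               ChangeEvent(4, "DELETE", 11)]
    print(apply_changes(replica, changes), replica)
```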

Real-time Processing

With real-time processing, data is analyzed as soon as it enters the system. Real-time analytics is crucial for scenarios where quick insights are needed, such as flight control systems and ATMs, where events must be generated, processed, and analyzed swiftly.

“Real-time analytics gives businesses an immediate understanding of their operations, customer behavior, and market conditions, allowing them to avoid the delays that come with traditional reporting,” says Simson Chow, Sr. Cloud Solutions Architect at Striim. “This access to information is necessary because it enables businesses to react effectively and quickly, which improves their ability to take advantage of opportunities and address problems as they arise.”
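The contrast between the two approaches can be sketched with a running sales total. The batch function recomputes totals over all stored records at the end of a period. The real-time version updates its answer with each incoming event. The record shape and names are hypothetical.

```python
from typing import Dict, List, Tuple

Sale = Tuple[str, float]  # hypothetical record: (store_id, amount)


def batch_totals(stored_sales: List[Sale]) -> Dict[str, float]:
    """Batch: wait until the period ends, then scan every stored record."""
    totals: Dict[str, float] = {}
    for store, amount in stored_sales:
        totals[store] = totals.get(store, 0.0) + amount
    return totals


class RunningTotals:
    """Real time: update the answer as each record arrives (constant work per event)."""

    def __init__(self) -> None:
        self.totals: Dict[str, float] = {}

    def on_event(self, sale: Sale) -> float:
        store, amount = sale
        self.totals[store] = self.totals.get(store, 0.0) + amount
        return self.totals[store]  # the fresh total is available immediately


if __name__ == "__main__":
    day = [("nyc", 10.0), ("sf", 5.0), ("nyc", 2.5)]
    live = RunningTotals()
    for sale in day:
        print("live:", sale[0], live.on_event(sale))
    print("end-of-day batch:", batch_totals(day))
```

Both produce the same final totals. The difference is when the answer becomes available.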

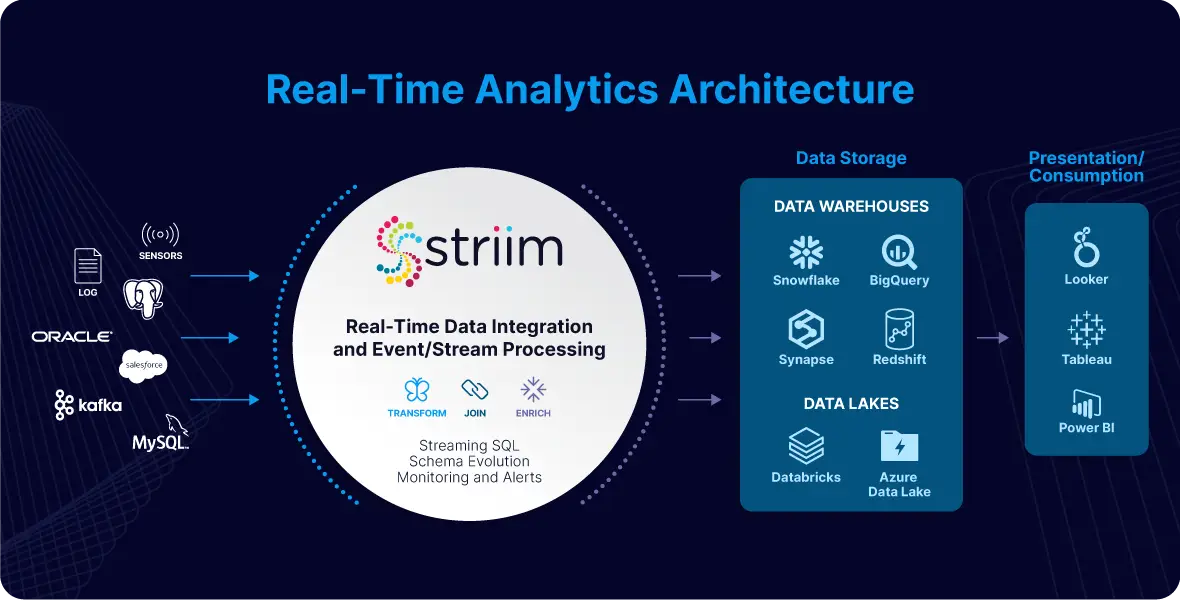

Real-Time Analytics Architecture

When implementing real-time analytics, you’ll need a different architecture and approach than you would with traditional batch-based data analytics. The streaming and processing of large volumes of data will also require a unique set of technologies.

With real-time analytics, raw source data is rarely what you want delivered to your target systems. More often than not, you need a data pipeline that begins with data integration and then lets you perform several operations on the data in flight before it reaches the target. This approach ensures the data is cleaned, enriched, and formatted according to your needs, improving its quality and usability for more accurate and actionable insights.

Data integration

The data integration layer is the backbone of any analytics architecture, because downstream reporting and analytics systems rely on consistent and accessible data. It must therefore be able to continuously ingest data of varying formats and velocities, from either external sources or existing cloud storage.

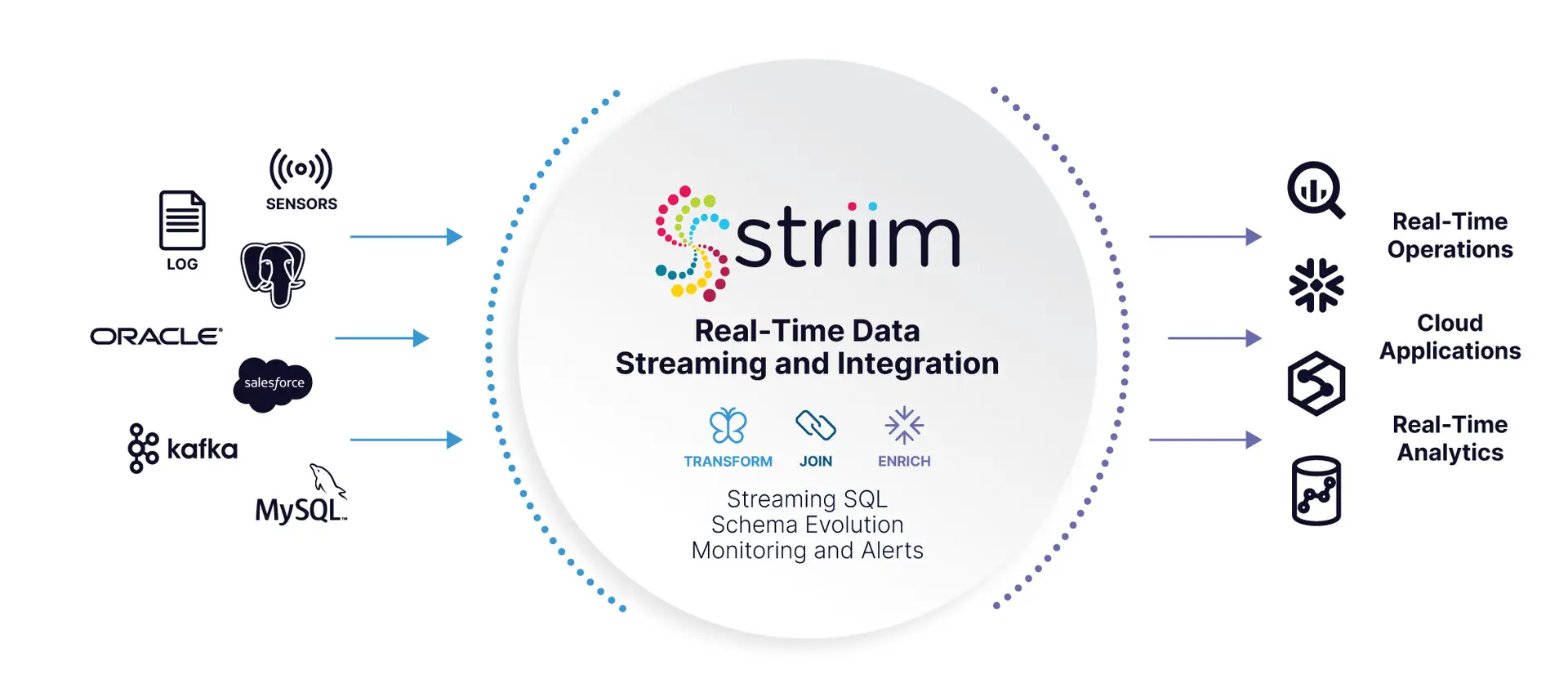

It’s crucial that the integration layer can handle large volumes of data from a variety of sources with minimal impact on source systems and with sub-second latency. This layer uses data integration platforms like Striim to connect to data sources, ingest streaming data, and deliver it to targets.

For instance, Striim enables the continuous movement of unstructured, semi-structured, and structured data. It extracts data from a wide variety of sources, such as databases, log files, sensors, and message queues, and delivers it in real time to targets such as big data platforms, cloud services, transactional databases, files, and messaging systems for immediate processing and use.
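Because the integration layer receives data in many formats, one of its first jobs is mapping records onto a common shape. The sketch below normalizes a JSON message, a CSV row, and a key=value log line into the same record. The field names and formats are hypothetical examples, not Striim’s data model.

```python
import csv
import io
import json


def normalize(raw: str, fmt: str) -> dict:
    """Convert one raw record into a common {device_id, value} shape."""
    if fmt == "json":  # semi-structured, e.g. from a message queue
        data = json.loads(raw)
        return {"device_id": str(data["device"]), "value": float(data["reading"])}
    if fmt == "csv":  # structured export with columns: device_id,value
        device_id, value = next(csv.reader(io.StringIO(raw)))
        return {"device_id": device_id, "value": float(value)}
    if fmt == "log":  # free-form log line containing device=... value=...
        fields = dict(part.split("=", 1) for part in raw.split() if "=" in part)
        return {"device_id": fields["device"], "value": float(fields["value"])}
    raise ValueError(f"unsupported format: {fmt}")


if __name__ == "__main__":
    print(normalize('{"device": 7, "reading": 21.5}', "json"))
    print(normalize("7,21.5", "csv"))
    print(normalize("2024-05-01T12:00:00Z INFO device=7 value=21.5", "log"))
```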

Event/stream processing

The event processing layer provides the components needed to handle data as it is ingested. Data arriving in real time is often described as streams or events, because each data point describes something that occurred at a particular time. These events typically need to be cleaned, enriched, processed, and transformed in flight before they can be stored or used to generate insights.

Therefore, another essential component for real-time data analytics is the infrastructure to handle real-time event processing.

Event/stream processing with Striim

Some data integration platforms, like Striim, perform in-flight data processing. This includes filtering, transformations, aggregations, masking, and enrichment of streaming data. These platforms deliver processed data with sub-second latency to various environments, whether in the cloud or on-premises.
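In-flight processing can be pictured as a chain of steps that each record passes through before delivery. The Python generators below sketch three of the operations listed above: filtering, masking, and enrichment. They illustrate the idea only and are not Striim’s TQL. The event fields and the region lookup table are hypothetical.

```python
from typing import Dict, Iterable, Iterator


def filter_events(events: Iterable[dict]) -> Iterator[dict]:
    """Filter: drop events downstream consumers don't need (here, test traffic)."""
    return (e for e in events if not e.get("is_test"))


def mask_card(events: Iterable[dict]) -> Iterator[dict]:
    """Mask: keep only the last four digits of a card number."""
    for e in events:
        card = e["card_number"]
        yield {**e, "card_number": "*" * (len(card) - 4) + card[-4:]}


def enrich(events: Iterable[dict], regions: Dict[str, str]) -> Iterator[dict]:
    """Enrich: add a region looked up from in-memory reference data."""
    for e in events:
        yield {**e, "region": regions.get(e["store_id"], "unknown")}


if __name__ == "__main__":
    incoming = [
        {"store_id": "s1", "card_number": "4111111111111111", "amount": 20.0},
        {"store_id": "s2", "card_number": "5500000000000004", "amount": 5.0, "is_test": True},
    ]
    # Generators are lazy, so each event flows through all steps one at a time.
    for event in enrich(mask_card(filter_events(incoming)), {"s1": "east"}):
        print(event)
```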

Additionally, Striim can deliver data to advanced stream processing platforms such as Apache Spark and Apache Flink. These platforms can handle and process large volumes of data while applying sophisticated business logic.

Data storage

A crucial element of real-time analytics infrastructure is a scalable, durable, and highly available storage service that can handle the large volumes of data needed for various analytics use cases. The most common storage architectures for big data are data warehouses and data lakes. Organizations seeking a mature, structured data solution focused on business intelligence and data analytics may consider a data warehouse. Data lakes, by contrast, suit enterprises that want a flexible, low-cost big data solution to power machine learning and data science workloads on unstructured data.

It’s rare for all the data required for real-time analytics to be contained in the incoming stream. Applications deployed to devices or sensors are generally built to be very lightweight and intentionally designed to produce minimal network traffic. Therefore, the data store should support aggregations and joins across different data sources, and it must be able to handle a variety of data formats.
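The sketch below shows why joins matter. Each sensor event carries only an ID, a timestamp, and a reading, and the storage layer joins it with reference data to produce a per-site summary. SQLite stands in for a warehouse or lake query engine, and the schema and values are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE devices (device_id INTEGER PRIMARY KEY, site TEXT, max_temp REAL);
CREATE TABLE readings (device_id INTEGER, ts INTEGER, temp REAL);
INSERT INTO devices VALUES (1, 'plant-a', 80.0), (2, 'plant-b', 75.0);
""")

# Lightweight events, as a sensor might send them: an ID, a timestamp, and a value.
conn.executemany("INSERT INTO readings VALUES (?, ?, ?)",
                 [(1, 100, 78.5), (2, 100, 76.2), (1, 160, 81.0)])

# Join the stream data with reference data, then aggregate per site.
# In SQLite a comparison yields 0 or 1, so SUM counts readings over the limit.
rows = conn.execute("""
SELECT d.site, COUNT(*) AS readings, MAX(r.temp) AS peak,
       SUM(r.temp > d.max_temp) AS over_limit
FROM readings AS r JOIN devices AS d ON r.device_id = d.device_id
GROUP BY d.site
ORDER BY d.site
""").fetchall()

print(rows)
```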

Presentation/consumption

At the core of a real-time analytics solution is a presentation layer that showcases the data processed in the pipeline. When designing a real-time architecture, keep this layer at the forefront, because it’s ultimately the end goal of the real-time analytics pipeline.

This layer provides analytics across the business for all users through purpose-built analytics tools that support analysis methodologies such as SQL, batch analytics, reporting dashboards, and machine learning. This layer is essentially responsible for:

- Providing visualization of large volumes of data in real time

- Directly querying data from large data stores, such as data lakes and warehouses

- Turning data into actionable insights using machine learning models that help businesses deliver quality brand experiences

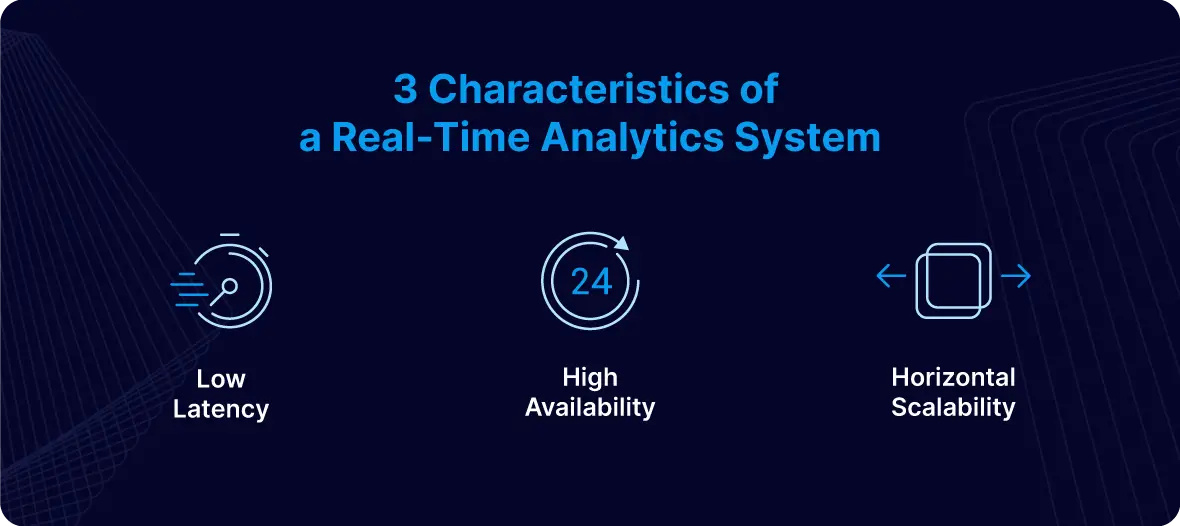

What are Key Characteristics of a Real-Time Analytics System?

A system that supports real-time analytics must have certain characteristics, including:

Low latency

In a real-time analytics system, latency is the time between when an event arrives in the system and when it is processed. This includes both processing latency and network latency. To ensure rapid data analysis, the system must operate with low latency. “Businesses can access the most accurate data since the system responds quickly and has minimal latency,” says Chow.
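Latency is usually tracked as a distribution rather than a single number, because a few slow events can matter as much as the average. This sketch computes nearest-rank percentiles from hypothetical `(arrived_at, processed_at)` timestamp pairs, measured in seconds. The function name is illustrative.

```python
import math
from typing import Dict, Iterable, Sequence, Tuple


def latency_percentiles(timings: Sequence[Tuple[float, float]],
                        percentiles: Iterable[int] = (50, 99)) -> Dict[int, float]:
    """Return nearest-rank latency percentiles in milliseconds.

    Each timing is (arrived_at, processed_at) in seconds.
    """
    latencies = sorted((done - arrived) * 1000 for arrived, done in timings)
    if not latencies:
        raise ValueError("no timings to summarize")
    result = {}
    for p in percentiles:
        rank = max(1, math.ceil(p / 100 * len(latencies)))  # nearest-rank method
        result[p] = latencies[rank - 1]
    return result


if __name__ == "__main__":
    samples = [(10.0, 10.5), (10.0, 10.25), (10.0, 10.125), (10.0, 10.0625)]
    print(latency_percentiles(samples))
```

Watching the 99th percentile alongside the median shows whether a small share of events is waiting far longer than the rest.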

High availability

Availability refers to a real-time analytics system’s ability to perform its function when needed. High availability is crucial because without it:

- The system cannot process data as soon as it arrives

- The system will struggle to store data or buffer it for later processing, particularly with high-velocity streams
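One small piece of availability is what happens when a downstream system goes offline. The sketch below buffers events in memory while a hypothetical `send` function fails, then replays them in order once sending succeeds again. It only covers short downstream outages. An in-memory buffer is lost if the forwarder itself crashes, which is why highly available systems also replicate state across nodes.

```python
from collections import deque
from typing import Callable, Deque


class BufferedForwarder:
    """Forward events downstream, buffering them while the target is unavailable.

    `send` is a hypothetical callable that raises ConnectionError when the
    downstream system is down.
    """

    def __init__(self, send: Callable[[dict], None], capacity: int = 10_000):
        self.send = send
        self.capacity = capacity
        self.buffer: Deque[dict] = deque()

    def submit(self, event: dict) -> None:
        self.drain()  # try to clear any backlog first
        if len(self.buffer) >= self.capacity:
            # A real system would spill to durable storage or apply backpressure.
            raise OverflowError("buffer full: downstream unavailable for too long")
        self.buffer.append(event)
        self.drain()

    def drain(self) -> None:
        while self.buffer:
            try:
                self.send(self.buffer[0])
            except ConnectionError:
                return  # downstream still down; keep events for later
            self.buffer.popleft()  # remove only after a successful send
```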

Chow adds, “High availability guarantees uninterrupted operation.”

Horizontal scalability

Finally, a key characteristic of a successful real-time analytics system is horizontal scalability: the ability to increase capacity or performance by adding more servers to the existing pool. When you cannot control the rate at which data arrives, horizontal scalability becomes crucial, because it lets you resize the system to handle incoming data effectively. “When the business adds more servers, the horizontal scalability feature of the system increases its flexibility even more by enabling it to handle more data and users,” shares Chow. “When combined, these characteristics ensure the system’s scalability, speed, and reliability as the business grows.”
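Adding servers only helps if incoming work is spread across them. A common approach, sketched here, is to route each event to a node by hashing a partition key such as a customer ID, so that events for the same key stay on the same node. The node names and function name are hypothetical.

```python
import hashlib
from collections import Counter
from typing import List


def assign_node(key: str, nodes: List[str]) -> str:
    """Route an event to a node by hashing its partition key.

    A stable hash (rather than Python's per-process randomized hash())
    keeps the same key on the same node across restarts.
    """
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return nodes[int.from_bytes(digest[:8], "big") % len(nodes)]


if __name__ == "__main__":
    cluster = ["node-1", "node-2", "node-3"]
    load = Counter(assign_node(f"customer-{i}", cluster) for i in range(3000))
    print(load)  # roughly even spread across the three nodes
```

With plain modulo hashing, changing the number of nodes moves most keys to a different node. Consistent hashing is a common refinement that limits how many keys move when the cluster grows.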

According to Rudakov, these three capabilities are crucial for several reasons. “[Low latency is important] because in order to move data for reasons above the operator needs data to get triggered ASAP with lowest latency possible,” he says. “Secondly, the system needs to be redundant with recovery support so that if it fails it comes back quickly and has no data loss. Finally, if the data is not moving fast enough, the operator needs to be able to easily scale the data moving system, i.e. add parallel components into the pipeline and add nodes into the cluster.”

Rudakov adds that’s exactly why Striim is the right choice for a real-time analytics platform. “Striim provides all real time platform necessary elements described above: low latency pipeline controls such as CDC readers to read data in real time from database logs, recovery, batch policies, ability to run pipelines in parallel and finally multi-node cluster to support HA and scalability,” he says. “Additionally, it supports an easy drag and drop interface to create pipelines in a simple SQL based language (TQL).”

Benefits of Real-Time Analytics

Real-time analytics offers many benefits, including:

To Optimize the Customer Experience

According to an IBM/NRF report, customer expectations for online shopping have evolved considerably since the pandemic. Consumers now seek hybrid services that let them move seamlessly from one channel to another, such as buy online, pick up in-store (BOPIS), or order online and get it delivered to their doorstep. The same report found that one in four consumers wants to shop the hybrid way.

To enable this, however, retailers need real-time analytics that moves data from their supply chain to the relevant departments. Organizations today need to monitor rapidly changing conditions around the clock and process and analyze cross-channel data immediately. Just consider how Macy’s used Striim to improve operational efficiency and create a seamless customer experience. “In many scenarios, businesses need to act in real time and if they don’t their revenue and customers get impacted,” says Rudakov.

Real-time analytics also enhances personalization, enabling brands to instantly deliver tailored content to consumers based on their actions on channels like websites, mobile apps, SMS, or email.

“Having access to real-time data allows a retail store to quickly respond to changes in demand for a certain item by adjusting inventory levels, launching focused marketing campaigns, or adjusting pricing techniques,” says Chow. “Similarly, companies may move quickly to address potential problems—like a drop in website performance or a decrease in consumer satisfaction—and mitigate negative consequences before they escalate.”

To Stay Proactive and Act Quickly

Real-time analytics also helps businesses stay proactive and act quickly when an anomaly occurs, as with fraud. Unfortunately, fraud is a reality for businesses of every size. Real-time analytics can help organizations identify theft, fraud, and other malicious activity as it happens, so they can move quickly if something goes wrong.

This is especially important because malicious online activity has surged over the past few years. Consumers reported losing more than $10 billion to fraud in 2023, according to the Federal Trade Commission.

“At some point a major credit card company used our platform to read network access logs and call an ML model to detect hacker attempts on their network,” shares Rudakov.

For example, companies can combine real-time analytics with machine learning and Markov modeling. Markov models can identify unusual patterns and estimate the likelihood that a transaction is fraudulent. If a transaction shows signs of unusual behavior, it gets flagged.
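The source does not specify the model, so the sketch below assumes a simple first-order Markov chain over a customer’s transaction categories. It estimates transition probabilities from past history and scores a new sequence by its average log-likelihood. Sequences that score far below normal would be flagged. The categories, smoothing, floor value, and function names are hypothetical choices.

```python
import math
from collections import Counter, defaultdict
from typing import Dict, Sequence

Transitions = Dict[str, Dict[str, float]]


def fit_transitions(history: Sequence[str], alpha: float = 1.0) -> Transitions:
    """Estimate P(next category | current category) from past transactions.

    Add-alpha (Laplace) smoothing gives unseen transitions between known
    categories a small, non-zero probability.
    """
    states = sorted(set(history))
    counts: Dict[str, Counter] = defaultdict(Counter)
    for cur, nxt in zip(history, history[1:]):
        counts[cur][nxt] += 1
    probs: Transitions = {}
    for cur in states:
        total = sum(counts[cur].values()) + alpha * len(states)
        probs[cur] = {nxt: (counts[cur][nxt] + alpha) / total for nxt in states}
    return probs


def avg_log_likelihood(seq: Sequence[str], probs: Transitions,
                       floor: float = 1e-6) -> float:
    """Average log-probability per transition; lower means more unusual.

    Transitions involving never-seen categories fall back to `floor`.
    """
    if len(seq) < 2:
        raise ValueError("need at least two transactions to score")
    logs = [math.log(probs.get(cur, {}).get(nxt, floor))
            for cur, nxt in zip(seq, seq[1:])]
    return sum(logs) / len(logs)


if __name__ == "__main__":
    past = ["grocery", "fuel", "grocery", "fuel", "grocery", "fuel", "grocery"]
    model = fit_transitions(past)
    print(avg_log_likelihood(["grocery", "fuel", "grocery"], model))
    print(avg_log_likelihood(["grocery", "electronics", "electronics"], model))
```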

To Improve Decision-Making

Up-to-date information shows organizations what they are doing well and where they are falling short, so they can decide how to improve.

For instance, if a piece of machinery isn’t working optimally in a manufacturing plant, real-time analytics can collect this data from sensors and generate data-driven insights that can help technicians resolve it.

Real-time Use Cases in Different Industries

The benefits of real-time analytics vary just as the use cases do. Let’s walk through several use cases of real-time analytics platforms.

Supply chain

Real-time analytics in supply chain management can enable better decision-making. Managers can view real-time dashboards to oversee the supply chain and plan for supply and demand. “Management of the supply chain is another example [of a real-time analytics use case]. By monitoring shipments and inventory data, real-time analytics allow companies to quickly fix delays or shortages,” says Chow.

Some of the other ways real-time analytics can help organizations include:

- Feed live data to route planning algorithms in the logistics industry. These algorithms can optimize routes and save time by taking traffic patterns, weather conditions, and fuel consumption into account.

- Aggregate real-time data from fuel-level sensors to resolve fuel issues that drivers face. These sensors can provide data on fuel levels, consumption, and refill dates.

- Collect real-time data from electronic logging devices (ELDs) to study and improve driver behavior. This data provides valuable insights into driving patterns, enabling fleet managers to implement targeted training and safety measures.
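Route planning with live data can be sketched with Dijkstra’s shortest-path algorithm, where each road segment’s base travel time is multiplied by a live traffic factor. The road graph, traffic factors, and function name are hypothetical. Production routing engines use considerably more elaborate methods.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Graph = Dict[str, List[Tuple[str, float]]]  # node -> [(neighbor, base minutes)]


def fastest_route(graph: Graph, traffic: Dict[Tuple[str, str], float],
                  start: str, goal: str) -> Tuple[Optional[List[str]], float]:
    """Dijkstra's algorithm where each edge costs base time x live traffic factor."""
    best = {start: 0.0}
    previous: Dict[str, str] = {}
    queue = [(0.0, start)]
    while queue:
        cost, node = heapq.heappop(queue)
        if node == goal:
            break
        if cost > best.get(node, float("inf")):
            continue  # stale queue entry; a cheaper path was already found
        for nxt, minutes in graph.get(node, []):
            new_cost = cost + minutes * traffic.get((node, nxt), 1.0)
            if new_cost < best.get(nxt, float("inf")):
                best[nxt] = new_cost
                previous[nxt] = node
                heapq.heappush(queue, (new_cost, nxt))
    if goal not in best:
        return None, float("inf")
    path = [goal]
    while path[-1] != start:
        path.append(previous[path[-1]])
    return path[::-1], best[goal]


if __name__ == "__main__":
    roads = {"depot": [("highway", 10.0), ("local", 15.0)],
             "highway": [("customer", 10.0)],
             "local": [("customer", 10.0)]}
    print(fastest_route(roads, {}, "depot", "customer"))
    # A live feed reports the highway segment is congested (twice the usual time).
    print(fastest_route(roads, {("highway", "customer"): 2.0}, "depot", "customer"))
```

Rerunning the search whenever the traffic factors change is what lets live data reroute vehicles.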

Finance

In certain industries, such as commodities trading, market fluctuations require organizations to be agile. Real-time analytics can help in these scenarios by detecting changes as they happen, enabling organizations to adapt to rapid market fluctuations. Financial firms can use real-time analytics to analyze different types of financial data, such as trading data, market prices, and transactional data.

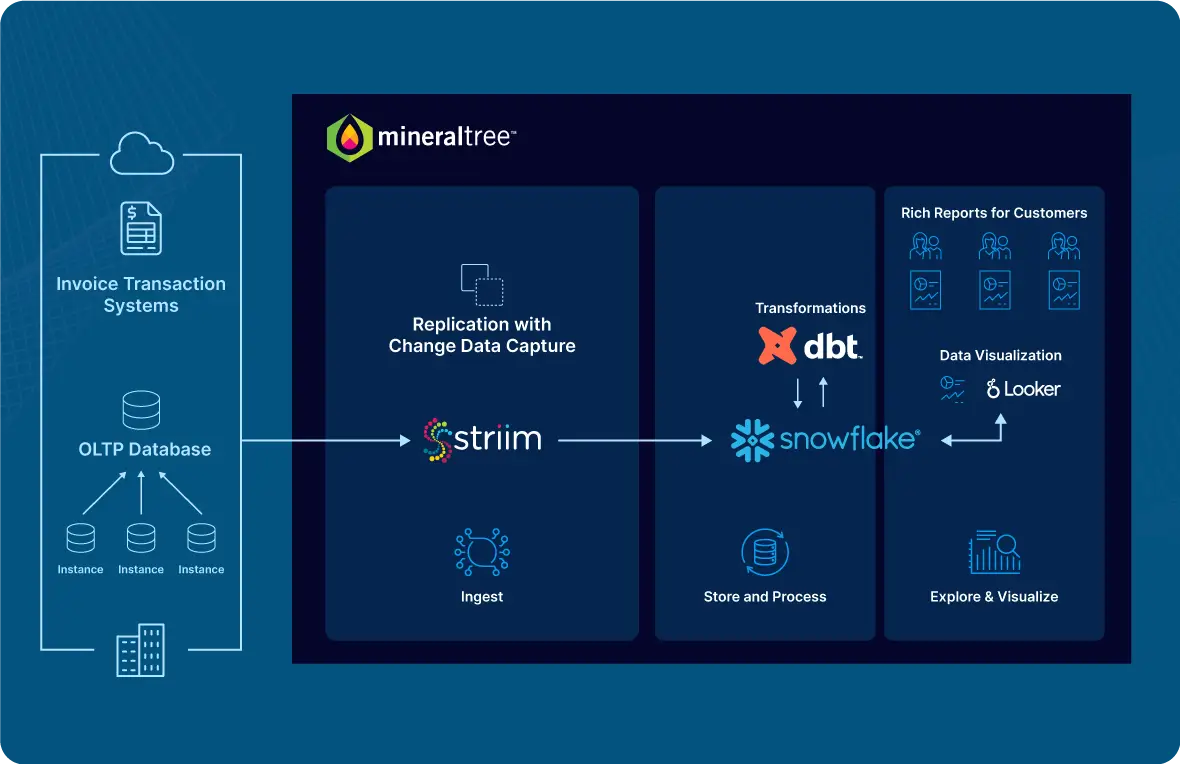

Consider the case of Inspyrus (now MineralTree), a fintech company seeking to improve accounts payable operations for businesses. The company wanted its users to get a real-time view of their transactional data from invoicing reports. However, its existing stack couldn’t support real-time analytics: data updates took a full hour, and some operations could take weeks. There were also technical issues with moving data from an online transaction processing (OLTP) database to Snowflake in real time.

Use Striim to power your real-time analytics infrastructure

Your real-time analytics infrastructure is only as good as the tool that supports it. Striim is a unified real-time data integration and streaming platform built to enable real-time analytics. It can help you:

- Collect data non-intrusively, securely, and reliably from operational sources (databases, data warehouses, IoT, log files, applications, and message queues) in real time

- Stream data to your cloud analytics platform of choice, including Google BigQuery, Microsoft Azure Synapse, Databricks Delta Lake, and Snowflake

- Offer data freshness SLAs to build trust among business users

- Perform in-flight data processing such as filtering, transformations, aggregations, masking, enrichment, and correlations of data streams with an in-memory streaming SQL engine

- Create custom alerts to respond to key business events in real time

When seeking a real-time analytics platform, look no further than Striim. Striim connects clouds, data, and applications, and you can use it to connect hundreds of enterprise sources while supporting data enrichment, complex in-flight data transformations, and more. “Striim uses log-based Change Data Capture (CDC) technology to capture real-time changes from the source database and continuously replicate the data in-memory to multiple target systems, all without disrupting the source database’s operation,” says Chow.

Ready to discover how Striim can help evolve how you process data? Sign up for a demo today.