4 Major Components for Mission-Critical IMC Processing

This is the first blog in a six-part series on making In-Memory Computing Enterprise Grade. Read the entire series:

- Part 1: overview

- Part 2: data architecture

- Part 3: scalability

- Part 4: reliability

- Part 5: security

- Part 6: integration

If you are looking to create an end-to-end in-memory streaming platform that enterprises will use for mission-critical applications, it is essential that the platform is Enterprise Grade. In a recent presentation at the In-Memory Computing Summit, I was asked to explain exactly what this means, and to share the best practices for achieving an enterprise-grade, in-memory computing architecture, based on what we have learned in building the Striim platform.

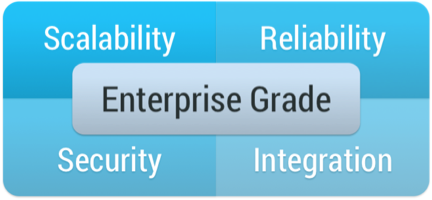

There are four major components to an enterprise-grade, in-memory computing platform: scalability, reliability, security and integration.

Scalability is not just about being able to add additional boxes, or spin up additional VMs in Amazon. It is about being able to increase the overall throughput of a system to deal with an expanded workload. This needs to take into account not just an increase in the amount of data being ingested, but also additional processing load (more queries on the same data) without slowing down the ingest. You also need to take into account scaling the volume of data you need to hold in-memory and any persistent storage you may need. All of this should happen as transparently as possible without impacting running data flows.
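To make "as transparently as possible" a little more concrete, here is a minimal sketch of one common partitioning technique, rendezvous (highest-random-weight) hashing. It is an illustration only, not a description of how Striim assigns work; the node names, the key format and the `owner` function are all hypothetical. The point it shows is that when a node is added, the only keys that change owner are the ones that move to the new node, so the rest of the running data flow is left alone.

```python
import hashlib

def owner(key: str, nodes: list[str]) -> str:
    """Rendezvous hashing: the node with the highest score for a key owns it.

    Hypothetical helper for illustration; node names are made up.
    """
    def score(node: str) -> int:
        digest = hashlib.sha256(f"{node}|{key}".encode()).digest()
        return int.from_bytes(digest[:8], "big")
    return max(nodes, key=score)

# Hypothetical partition keys, e.g. one per device sending events.
keys = [f"device-{i}" for i in range(1000)]

# Ownership before and after scaling out from three nodes to four.
before = {k: owner(k, ["node-a", "node-b", "node-c"]) for k in keys}
after = {k: owner(k, ["node-a", "node-b", "node-c", "node-d"]) for k in keys}

# Keys whose owner changed; with this scheme they can only move to node-d.
moved = [k for k in keys if before[k] != after[k]]
only_to_new_node = all(after[k] == "node-d" for k in moved)
print(len(moved), only_to_new_node)
```

Roughly a quarter of the keys would be expected to move in this setup. A real platform also has to migrate the in-memory state behind those keys, which this sketch does not attempt.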

For mission-critical enterprise applications, Reliability is an absolute requirement. In-memory processing and data-flows should never stop, and should guarantee processing of all data. In many cases, it is also imperative that results are generated once-and-only-once, even in the case of failure and recovery. If you are doing distributed in-memory processing, data will be partitioned over many nodes. If a single node fails, the system not only needs to pick up from where the failed node left off, it also needs to repartition over remaining nodes, recover state, and know what results have been written where.
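The once-and-only-once requirement is easier to see in a small example. The sketch below is a simplified illustration under stated assumptions, not Striim's recovery mechanism: it assumes events in a partition carry ordered offsets, and that the target records the last offset it applied together with the results. The `Sink` class, the `process` function and the `fail_at` parameter are hypothetical names. After a simulated failure, processing replays from the last checkpoint and the sink ignores anything it has already written.

```python
class Sink:
    """Hypothetical target that remembers the last offset it applied."""

    def __init__(self):
        self.results = []
        self.last_offset = -1

    def write(self, offset, value):
        # Idempotent write: ignore anything at or before the recorded offset.
        if offset <= self.last_offset:
            return False
        self.results.append(value)
        self.last_offset = offset
        return True


def process(events, sink, start_offset, fail_at=None):
    """Process events from start_offset, optionally simulating a crash."""
    for offset in range(start_offset, len(events)):
        if fail_at is not None and offset == fail_at:
            raise RuntimeError("simulated node failure")
        sink.write(offset, events[offset] * 2)


events = [1, 2, 3, 4, 5]
sink = Sink()
checkpoint = 0  # assumed: the last checkpoint was taken before offset 0

try:
    process(events, sink, checkpoint, fail_at=3)  # crashes before offset 3
except RuntimeError:
    pass

# Recovery: replay from the checkpoint; offsets 0-2 are skipped by the sink.
process(events, sink, checkpoint)
print(sink.results)  # [2, 4, 6, 8, 10]
```

Each result appears exactly once even though offsets 0 to 2 were processed twice. In a distributed setting the same idea has to be combined with repartitioning and state recovery, which is where most of the real complexity lies.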

Another key requirement is Security. The overall system needs an end-to-end authentication and authorization mechanism to protect data flow components and any external touch points. For example, a user who is able to see the end results of processing in a dashboard may not have the authority to query an initial data stream that contains personally identifiable information. Additionally, any data in flight should be encrypted. In-memory computing, and the Striim platform specifically, generally does not write intermediate data to disk, but does transmit data between nodes for scalability purposes. This inter-node data should be encrypted, especially over standard messaging frameworks such as Kafka that could easily be tapped into.
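The dashboard example above can be sketched as a simple role-based check. This is a minimal illustration, not Striim's security model: the role names, resource names (`dashboard:order_totals`, `stream:raw_orders`) and the `is_allowed` helper are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Permission:
    action: str    # e.g. "read" or "query"
    resource: str  # e.g. "dashboard:order_totals" (hypothetical name)


@dataclass
class Role:
    name: str
    permissions: set = field(default_factory=set)


def is_allowed(roles, action, resource):
    """Allow the request only if some role explicitly grants it."""
    wanted = Permission(action, resource)
    return any(wanted in role.permissions for role in roles)


# Hypothetical roles: a dashboard viewer and a data engineer.
viewer = Role("dashboard_viewer", {
    Permission("read", "dashboard:order_totals"),
})
engineer = Role("data_engineer", {
    Permission("read", "dashboard:order_totals"),
    Permission("query", "stream:raw_orders"),  # stream containing PII
})

print(is_allowed([viewer], "read", "dashboard:order_totals"))   # True
print(is_allowed([viewer], "query", "stream:raw_orders"))       # False
print(is_allowed([engineer], "query", "stream:raw_orders"))     # True
```

The design choice worth noting is deny by default: a request succeeds only when a role grants that exact action on that exact resource, so a new stream is invisible to everyone until access is explicitly given.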

The final Enterprise Grade requirement is Integration. You can have the most amazing in-memory computing platform, but if it does not integrate with your existing IT infrastructure it is a barren, data-less island. There are a number of different things to consider from an integration perspective. Most importantly, you need to get data in and out. You need to be able to harness existing sources, such as databases, log files, messaging systems and devices, in the form of streaming data, and write the results of processing to existing stores such as a data warehouse, data lake, cloud storage or messaging systems. You also need to consider any data you may need to load into memory from external systems for context or enrichment purposes, and any existing code or algorithms that may form part of your in-memory processing.

You can build an in-memory streaming platform without taking into account any of these requirements, but it would only be suitable for research or proof-of-concept purposes. If software is going to be used to run mission-critical enterprise data flows, it must address these criteria and follow best practices to play nicely with the rest of the enterprise.

Striim has been designed from the ground up to be Enterprise Grade, and not only meets these requirements, but does so in an easy-to-use and robust fashion.

In subsequent blogs I will expand upon these ideas, and provide a framework for ensuring your streaming integration and analytics use cases make the grade.