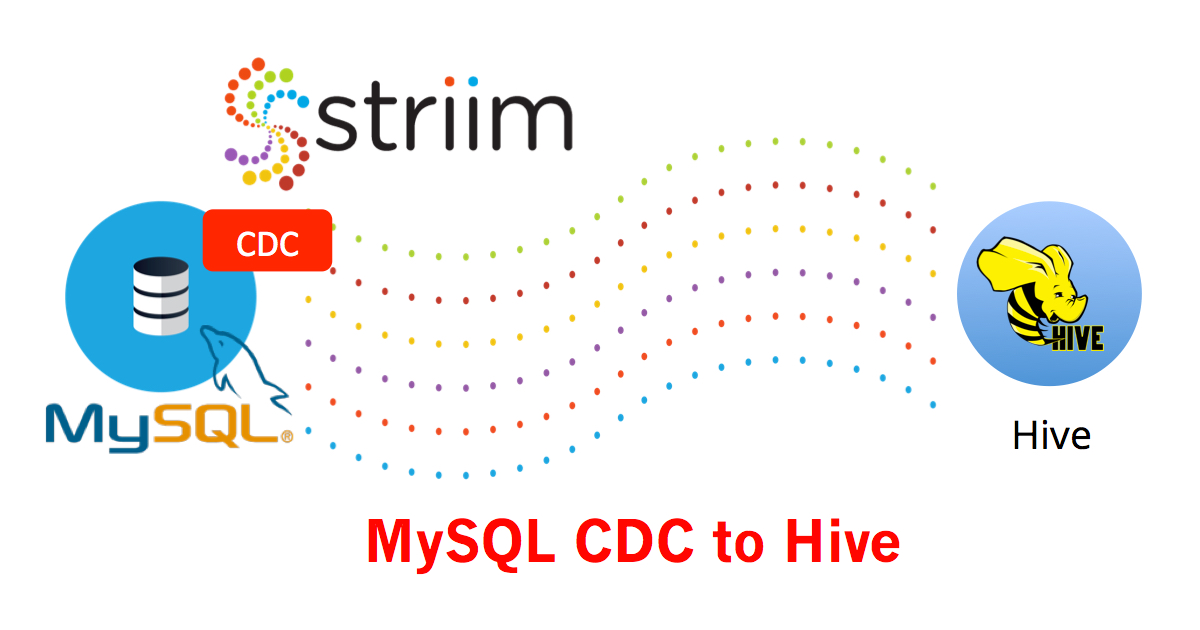

Enterprise databases, such as MySQL, contain the operational data that organizations need to make critical decisions. Striim’s MySQL CDC to Hive solution delivers that data in real time directly to Apache Hive to allow users to take advantage of Hive’s data query and analysis capabilities on Hadoop. Continuous data feeds help Hive users to work with large data sets efficiently, and realize true value from both their analytics solutions and their growing volumes of data.

Achieving this goal, however, can be thwarted by a lack of access to comprehensive and up-to-the-millisecond information that gives an accurate picture of a business at any point in time. The essential transactional and operational data stored in on-premises databases, including MySQL, needs to be delivered in real time and in the right format so that Hive can quickly query it, analyze it, and deliver insight to the business.

Moving data using traditional batch ETL methods falls short of what is required. The latency introduced means that, even if all the data is transferred, it is already out of date by the time it is available for data analysts to query and analyze.

There is an alternative: change data capture (CDC). Going beyond batch ETL, MySQL CDC to Hive is non-intrusive and moves and processes only the data that has changed. Using Striim’s streaming data integration platform, organizations can ensure that high volumes of data are not just moved, but also processed and enriched before delivery to Hive, in milliseconds.
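To make the idea of moving only changed data concrete, here is a minimal Python sketch of applying a stream of change events to a target copy of a table. It is an illustration of the general CDC concept only, not Striim's API: the `ChangeEvent` type, the `apply_changes` function, and the in-memory dictionary standing in for a target table are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import Any, Dict, Iterable, Optional


@dataclass
class ChangeEvent:
    """One captured change (hypothetical shape, for illustration only)."""
    op: str                               # "insert", "update", or "delete"
    key: int                              # primary key of the affected row
    row: Optional[Dict[str, Any]] = None  # new row image; None for deletes


def apply_changes(target: Dict[int, Dict[str, Any]],
                  events: Iterable[ChangeEvent]) -> int:
    """Apply change events to the target in order; return how many were applied."""
    applied = 0
    for event in events:
        if event.op in ("insert", "update"):
            # Write the new row image for this key.
            target[event.key] = dict(event.row or {})
        elif event.op == "delete":
            # Remove the row if it is present.
            target.pop(event.key, None)
        else:
            raise ValueError(f"unknown operation: {event.op}")
        applied += 1
    return applied


# The target already holds three rows; only three changes need to move.
target = {
    1: {"status": "new"},
    2: {"status": "new"},
    3: {"status": "new"},
}
events = [
    ChangeEvent("update", 2, {"status": "shipped"}),
    ChangeEvent("delete", 3),
    ChangeEvent("insert", 4, {"status": "new"}),
]
applied = apply_changes(target, events)
```

In this sketch, row 1 is never touched or re-sent: the work done is proportional to the number of changes rather than to the size of the table, which is the property that distinguishes CDC from a full batch reload.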

Low-impact, real-time data ingestion from relational databases using MySQL CDC to Hive is vital to performing the powerful analysis required to deliver the insights and efficiencies that ensure businesses can meet the needs of their customers and remain competitive. When data is processed and enriched in-flight, without added latency, organizations gain insights faster and more easily, while better managing their limited data storage capacity.

Striim delivers built-in exactly-once processing (E1P) in addition to the security, high availability, and scalability required of an enterprise-grade solution. MySQL CDC to Hive gives organizations zero-downtime, zero-data-loss data movement to support offline, online, and phased migrations.

Low-impact MySQL CDC to Hive is just one of the CDC solutions for relational databases that Striim offers. Striim also supports CDC for Oracle, Microsoft SQL Server, PostgreSQL, MongoDB, HPE NonStop SQL/MX, HPE NonStop SQL/MP, HPE NonStop Enscribe, and MariaDB.

To learn more about using CDC to ingest high-velocity, high-volume data, please visit our Change Data Capture for Databases page.

Our technologists can provide you with a closer look at MySQL CDC to Hive. We invite you to schedule a demo.