GCS Writer

Google Cloud Storage is a cloud storage platform designed to store large, unstructured data sets. It provides unified file storage for data. You can use the GCS Writer to write data to a Google Cloud Storage bucket.

Summary

| APIs used/data supported | Google Cloud Storage APIs: Put, Create and List bucket |
| Supported formatters | Avro, DSV, JSON, Parquet, XML. See Supported writer-formatter combinations. |
| Supported sources | All sources supported by Striim such as Oracle, SQL Server, MySQL, Amazon S3, or Azure Storage, that generate the following event types: JSONNodeEvent, ParquetEvent, user-defined, WAEvent, XMLNodeEvent. Unsupported sources include those that generate the AvroEvent type. See Readers overview. |
| Security and authentication | GCS Writer authenticates its connection to Google Cloud Storage using a Google service account key. GCS Writer supports private endpoints. See Using Private Service Connect with Google Cloud adapters. |
| Resilience / recovery | |
| Performance | Supports parallel threads to increase throughput to the target. See Creating multiple writer instances (parallel threads). |
| Programmability | |
| Metrics and auditing | Key metrics available through Striim monitoring. See GCS Writer monitoring metrics. |

Typical use case and integration

A typical use case is to use Google Cloud Storage as a centralized repository that stores, processes, and secures files of any format. Striim processes newly generated events from the source and writes them to the bucket.

GCS Writer overview

Google Cloud Storage is binary large object (blob) storage from Google, and is functionally similar to Amazon S3 or Azure Blob Storage. Similar to Striim's S3 Writer, the GCS Writer stages files locally before they are uploaded to GCS. Like other blob storage, GCS doesn't allow appending to a file; a file is uploaded as is.

The local staging area is located at .striim/<appname>/<bucket-name>/<user-provided-folder-path>/<file>. Once a file is uploaded, it is deleted from the local staging area.

Google supports a number of different methods for uploading to cloud storage (see Uploads and downloads). GCS Writer uses the Upload objects from memory method. Once GCS Writer has staged the file locally, it reads the entire contents into a byte array and uploads them to the bucket. This adapter requires you to provide:

A Google service account key (which you can download in JSON format from your Google Cloud account).

The ID of a project in which the Google service account has read/write permissions on an existing GCS bucket.
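The staging and upload flow described above can be illustrated with a minimal Python sketch. This is not Striim's implementation: the function names, the `upload` callable, and the file name `myobjectname.00` are hypothetical, and the uploader is injected so the sketch runs without a Google client library. It only shows the order of operations: build the staging path, read the whole staged file into memory, upload it, then delete the local copy.

```python
import tempfile
from pathlib import Path
from typing import Callable


def staging_path(root: Path, app_name: str, bucket: str, folder: str, file_name: str) -> Path:
    """Build .striim/<appname>/<bucket-name>/<user-provided-folder-path>/<file>."""
    return root / ".striim" / app_name / bucket / folder / file_name


def upload_staged_file(path: Path, upload: Callable[[str, bytes], None], object_name: str) -> int:
    """Read the staged file in one piece, upload it, then remove the local copy."""
    data = path.read_bytes()   # entire contents held in memory as one byte array
    upload(object_name, data)  # hypothetical uploader; expected to raise on failure
    path.unlink()              # reached only after the upload call has returned
    return len(data)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        staged = staging_path(Path(tmp), "testGCS", "mybucket", "myfolder", "myobjectname.00")
        staged.parent.mkdir(parents=True)
        staged.write_bytes(b"MerchantId,AuthAmount\n")
        fake_bucket = {}  # stands in for the GCS bucket in this sketch
        size = upload_staged_file(staged, fake_bucket.__setitem__, "myfolder/myobjectname.00")
        print(size, staged.exists(), list(fake_bucket))
```

Because the whole file is held in memory during the upload, the size of each staged file bounds the memory needed per upload, which is one reason to keep rollover thresholds moderate.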

Initial Google Cloud Storage setup

Before you can use GCS Writer in a Striim application, you must perform initial setup steps. These include creating a custom Google Cloud Storage role with permissions on the bucket, assigning the role to a service account you create, and downloading the service account key needed to authenticate the GCS Writer.

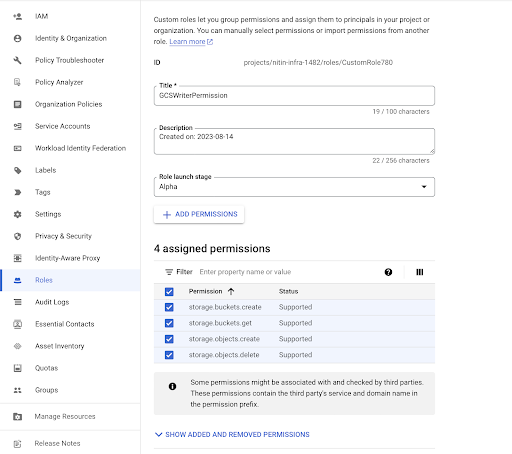

Create a custom Google Cloud Storage role with the following permissions on the bucket and assign it to your service account:

storage.buckets.create

storage.buckets.get

storage.objects.create

storage.objects.delete

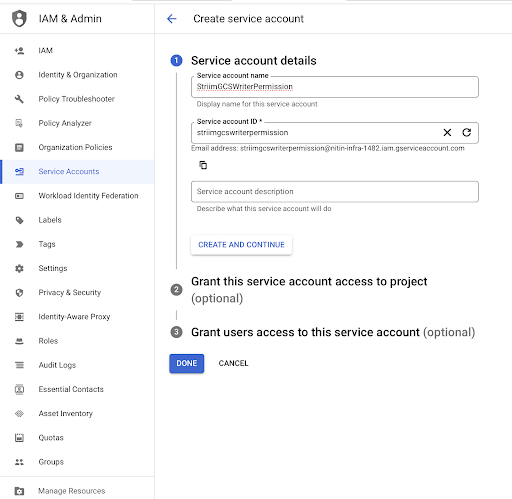

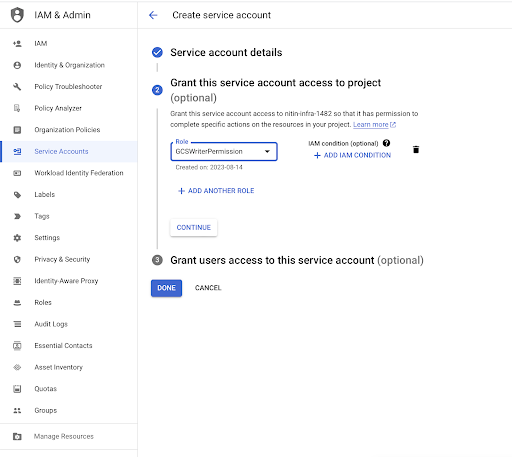

Create a service account and assign the above role to it (see Service accounts overview).

Create a service account.

Assign the role to the service account.

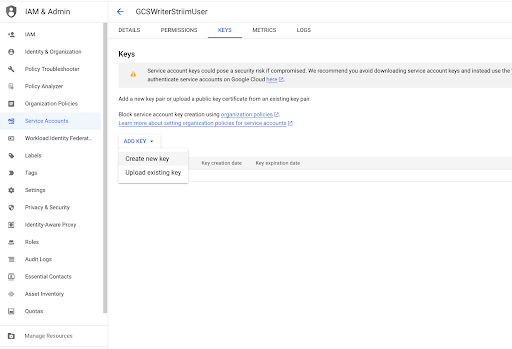

Download the service account key needed to authenticate the GCS Writer.
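Before pointing GCS Writer at the downloaded key, it can help to sanity-check the file. The following Python sketch is an optional, hypothetical helper (the function name and messages are not part of Striim or Google tooling). It assumes the usual layout of a service account key in JSON format, with `type`, `project_id`, `private_key`, and `client_email` fields, and reports anything that looks off.

```python
import json
import tempfile
from pathlib import Path

# Fields found in a service account key downloaded in JSON format.
REQUIRED_FIELDS = ("type", "project_id", "private_key", "client_email")


def check_service_account_key(path, expected_project_id):
    """Return a list of human-readable findings; an empty list means none."""
    key = json.loads(Path(path).read_text(encoding="utf-8"))
    findings = [f"missing field: {name}" for name in REQUIRED_FIELDS if not key.get(name)]
    if key.get("type") and key["type"] != "service_account":
        findings.append(f"unexpected key type: {key['type']}")
    # A mismatch is only a hint: a service account can be granted access
    # to a bucket in a project other than the one that owns the account.
    if key.get("project_id") and key["project_id"] != expected_project_id:
        findings.append(f"key project is {key['project_id']}, expected {expected_project_id}")
    return findings


if __name__ == "__main__":
    # Placeholder values only; a real key file contains an actual private key.
    sample = {
        "type": "service_account",
        "project_id": "myproject",
        "private_key": "placeholder",
        "client_email": "writer@myproject.iam.gserviceaccount.com",
    }
    with tempfile.TemporaryDirectory() as tmp:
        key_file = Path(tmp) / "key.json"
        key_file.write_text(json.dumps(sample), encoding="utf-8")
        print(check_service_account_key(key_file, "myproject"))
```

The check only inspects the file's structure. It does not contact Google Cloud, so it cannot confirm that the role assignment on the bucket is correct.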

Configuring Striim to work with Google Cloud Storage

All clients and drivers required by GCS Writer are bundled with Striim. No additional setup is required.

Create a GCS Writer application

Create a GCS Writer application using a wizard

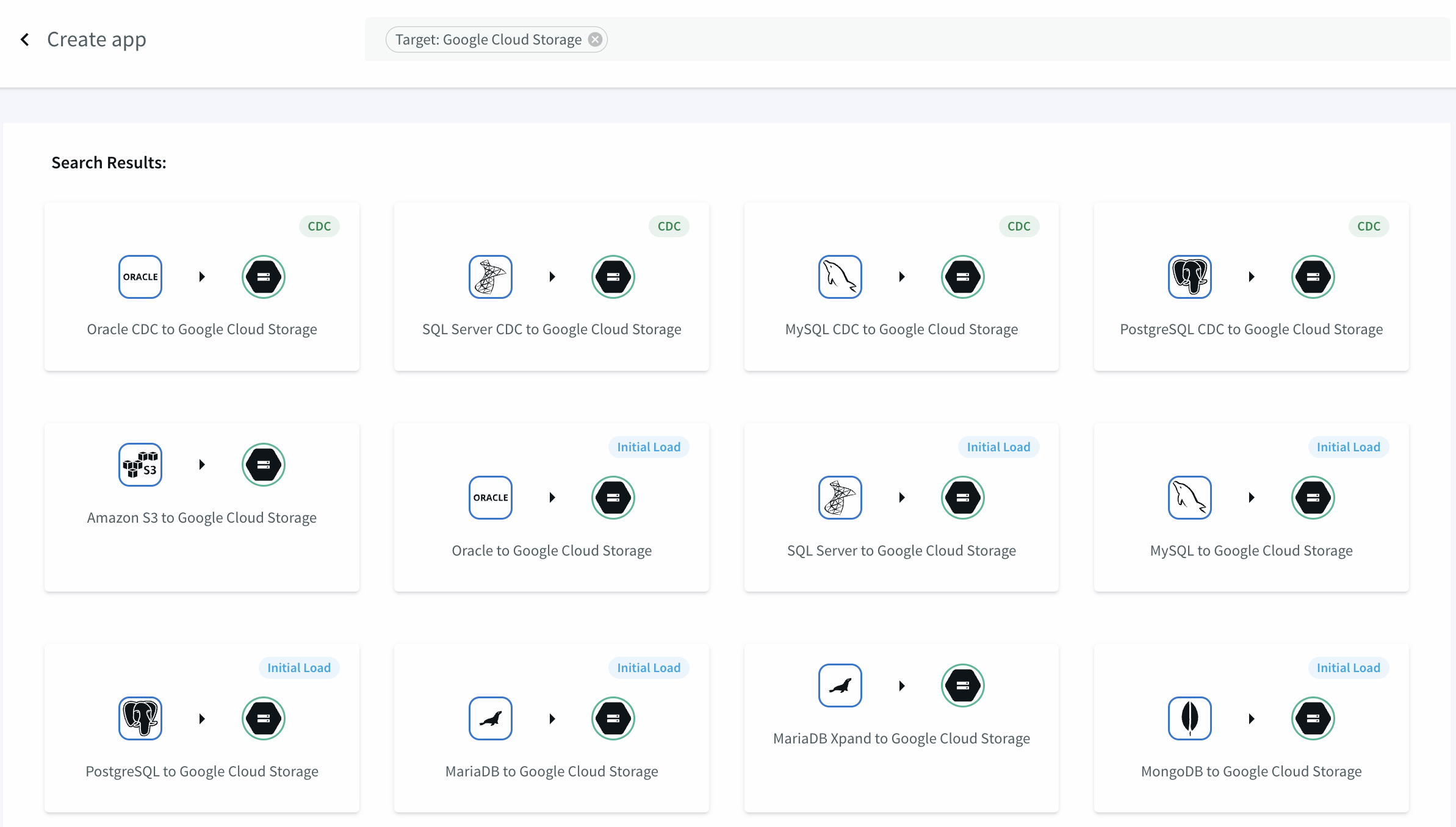

In Striim, select Apps > Create New, enter Target: Google Cloud Storage in the search bar, click the kind of application you want to create, and follow the prompts. See Creating apps using wizards for more information.

Create a GCS Writer application using TQL

    CREATE APPLICATION testGCS;

    -- Read the sample point-of-sale CSV file from the beginning.
    CREATE SOURCE PosSource USING FileReader (
      wildcard: 'PosDataPreview.csv',
      directory: 'Samples/PosApp/appData',
      positionByEOF: false
    )
    PARSE USING DSVParser (
      header: Yes,
      trimquote: false
    )
    OUTPUT TO PosSource_Stream;

    -- Select and type the fields to be written.
    CREATE CQ PosSource_Stream_CQ
    INSERT INTO PosSource_TransformedStream
    SELECT TO_STRING(data[1]) AS MerchantId,
      TO_DATE(data[4]) AS DateTime,
      TO_DOUBLE(data[7]) AS AuthAmount,
      TO_STRING(data[9]) AS Zip
    FROM PosSource_Stream;

    -- Write the transformed events to the GCS bucket as delimited text.
    CREATE TARGET GCSOut USING GCSWriter (
      bucketName: 'mybucket',
      objectName: 'myobjectname',
      serviceAccountKey: 'conf/myproject-ec6f8b0e3afe.json',
      projectId: 'myproject'
    )
    FORMAT USING DSVFormatter ()
    INPUT FROM PosSource_TransformedStream;

    END APPLICATION testGCS;

Create a GCS Writer application using the Flow Designer

GCS Writer programmer's reference

GCS Writer properties

| property | type | default value | notes |
|---|---|---|---|
| Bucket Name | String | | The GCS bucket name. If it does not exist, it will be created (provided Location and Storage Class are specified). Note the limitations in Google's Bucket and Object Naming Guidelines, particularly that bucket names must be unique not just within your project or account but across all Google Cloud Storage accounts. See Setting output names and rollover / upload policies for advanced Striim options. |
| Client Configuration | String | | Optionally, specify one or more of the following property-value pairs, separated by commas, to override Google's defaults (see Class RetrySettings): |
| Compression Type | String | | Set to |
| Data Encryption Key Passphrase | encrypted password | | |
| Encryption Policy | String | | |
| Folder Name | String | | Optionally, specify a folder within the specified bucket. If it does not exist, it will be created. See Setting output names and rollover / upload policies for advanced options. |
| Location | String | | The location of the bucket, which you can find on the bucket's overview tab (see Bucket Locations). |
| Object Name | String | | The base name of the files to be written. See Google's Bucket and Object Naming Guidelines. See Setting output names and rollover / upload policies for advanced Striim options. |
| Parallel Threads | Integer | | |
| Partition Key | String | | This property has been deprecated and will be removed in a future release. |
| Private Service Connect Endpoint | String | | If using Private Service Connect with Google Cloud Storage, specify the endpoint created in the target Virtual Private Cloud, as described in Private Service Connect support for Google cloud adapters. |
| Project Id | String | | The Google Cloud Platform project for the specified bucket. |
| Roll Over on DDL | Boolean | True | Has effect only when the input stream is the output stream of a CDC reader source. With the default value of True, rolls over to a new file when a DDL event is received. Set to False to keep writing to the same file. |
| Service Account Key | String | | The path (from root or the Striim program directory) and file name to the .json credentials file downloaded from Google (see Service Accounts). This file must be copied to the same location on each Striim server that will run this adapter, or to a network location accessible by all servers. The associated service account must have the Storage Legacy Bucket Writer role for the specified bucket. |
| Storage Class | String | | The storage class of the bucket, which you can find on the bucket's overview tab (see Bucket Locations). |
| Time Zone Offset in File Name | String | | |
| Upload Policy | String | eventcount:10000, interval:5m | The upload policy may include eventcount, interval, and/or filesize (see Setting output names and rollover / upload policies for syntax). Cached data is written to GCS every time any of the specified values is exceeded. With the default value, data will be written every five minutes or sooner if the cache contains 10,000 events. When the app is undeployed, all remaining data is written to GCS. |

This adapter has a choice of formatters. See Supported writer-formatter combinations for more information.
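To make the Upload Policy semantics concrete, here is a small Python model of the default value `eventcount:10000, interval:5m`. It is an illustrative sketch, not Striim code: the class and function names are hypothetical, the `s`/`m`/`h` interval suffixes and the inclusive comparisons are assumptions, and `filesize` is deliberately not modeled because its syntax is described elsewhere.

```python
import re
from dataclasses import dataclass
from typing import Optional

_UNIT_SECONDS = {"s": 1, "m": 60, "h": 3600}  # assumed interval suffixes


@dataclass
class UploadPolicy:
    event_count: Optional[int] = None       # upload once this many events are cached
    interval_seconds: Optional[int] = None  # upload once this much time has passed

    def should_upload(self, cached_events: int, seconds_since_upload: float) -> bool:
        """True when any configured threshold has been reached."""
        by_count = self.event_count is not None and cached_events >= self.event_count
        by_time = (self.interval_seconds is not None
                   and seconds_since_upload >= self.interval_seconds)
        return by_count or by_time


def parse_upload_policy(text: str) -> UploadPolicy:
    """Parse a string such as 'eventcount:10000, interval:5m'."""
    policy = UploadPolicy()
    for part in text.split(","):
        name, _, value = part.strip().partition(":")
        name, value = name.strip().lower(), value.strip().lower()
        if name == "eventcount":
            policy.event_count = int(value)
        elif name == "interval":
            match = re.fullmatch(r"(\d+)([smh])", value)
            if match is None:
                raise ValueError(f"unsupported interval: {value!r}")
            policy.interval_seconds = int(match.group(1)) * _UNIT_SECONDS[match.group(2)]
        elif name:
            raise ValueError(f"element not modeled in this sketch: {name!r}")
    return policy


if __name__ == "__main__":
    default = parse_upload_policy("eventcount:10000, interval:5m")
    print(default.should_upload(cached_events=10_000, seconds_since_upload=12))
    print(default.should_upload(cached_events=250, seconds_since_upload=120))
```

With the default policy, a burst of 10,000 events triggers an upload immediately, while a slow trickle of events is uploaded when the five-minute interval elapses.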

GCS Writer runtime considerations

GCS Writer monitoring metrics

The following monitoring metrics are published by GCS Writer:

External I/O Latency: Time duration to complete I/O.

Last Checkpointed Position: Value of the last checkpointed position.

Last I/O Size: Size of the last upload in bytes.

Last I/O Time: Timestamp of the last I/O.

Last Uploaded Filename: Name of the last uploaded file.

Total Events In Last Upload: Total number of events in the last upload.

Optimizing GCS Writer performance

GCS Writer's Parallel Threads property may in some circumstances allow you to improve performance. For more information, see Creating multiple writer instances (parallel threads). Each instance runs in its own thread, increasing throughput. If your application were deployed to multiple servers, each server would run multiple instances, so the total number of instances would be the Parallel Threads value times the number of servers. Use Parallel Threads only when the target is not able to keep up with incoming events (that is, when its input stream has backpressure). See Understanding and managing backpressure. Otherwise, the overhead imposed by additional threads could reduce the application's performance.
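The instance arithmetic above is simple enough to state as a one-line function. The function name below is hypothetical and exists only to illustrate the multiplication:

```python
def total_writer_instances(parallel_threads: int, servers: int) -> int:
    """Total GCS Writer instances: Parallel Threads value times number of servers."""
    if parallel_threads < 1 or servers < 1:
        raise ValueError("both values must be at least 1")
    return parallel_threads * servers


if __name__ == "__main__":
    # Parallel Threads set to 4, application deployed to 3 servers.
    print(total_writer_instances(4, 3))  # prints 12
```

Since each instance stages and uploads its own files, the total also indicates how many concurrent uploads the target bucket may receive.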

Troubleshooting GCS Writer

This topic describes errors you may see when using GCS Writer, and possible resolutions.

| Exception | Resolution |
|---|---|
| com.google.cloud.storage.StorageException: gcsreaderaccesslevelzero@xyz.gserviceaccount.com does not have access to the Google Cloud Storage object | |